You need to choose the approach that best matches your needs.

Android play sounds android#

If you want to play audio in your Android apps, there are three APIs to choose from. As with most of the functions available on the Android platform, there is almost always more than one way to accomplish a given task. The high-level APIs MediaPlayer, MediaRecorder, and SoundPool are useful and easy to use when you need to play and record audio without low-level control. The low-level APIs AudioTrack and AudioRecord are the better fit when you need low-level control over playing and recording audio. The figure below shows the various classes and APIs you can employ to accomplish common audio tasks.

No discussion of audio would be complete without talking about latency. Audio latency has been one of the most annoying issues on the entire platform, and Android developers are second-class citizens when it comes to audio latency on our mobile platform. Android audio latency has seen improvements but still lags other platforms. The most recent significant improvements include OpenSL support, which was added in Android 2.3+, and USB Audio, which is included in Android 5.0+ (API 21) but is not yet supported by most devices.

MediaExtractor facilitates extraction of demuxed, typically encoded, media data from a data source. It reads bytes from the encoded data, whether the source is an online stream, an embedded resource, or a local file. SpeechRecognizer provides access to the speech recognition service; the implementation of this API is likely to stream audio to remote servers to perform speech recognition. TextToSpeech synthesizes speech from text for immediate playback or to create a sound file. The constructor for the TextToSpeech class uses the default TTS engine. The AudioFormat class provides a number of audio format and channel configuration constants that can be used in AudioTrack and AudioRecord. SoundPool uses the MediaPlayer service to decode the audio into a raw 16-bit PCM stream and plays the sound with very low latency, which spares the CPU the decompression effort during playback.
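To make the "raw 16-bit PCM stream" mentioned above more concrete, here is a minimal sketch in plain Java (no Android classes) that fills a buffer with signed 16-bit mono samples for a sine tone. The class name `PcmTone` and the method `sine` are hypothetical names chosen for illustration, and the 440 Hz and 48 kHz values are example figures rather than anything prescribed by the platform.

```java
/** Hypothetical helper for illustration; not part of the Android SDK. */
final class PcmTone {
    private PcmTone() {}

    /** Returns mono, signed 16-bit PCM samples of a full-scale sine wave. */
    static short[] sine(double frequencyHz, int sampleRateHz, int frameCount) {
        short[] samples = new short[frameCount];
        for (int i = 0; i < frameCount; i++) {
            // Phase of the sine wave at frame i, in radians.
            double phase = 2.0 * Math.PI * frequencyHz * i / sampleRateHz;
            // Scale the [-1, 1] sine value into the signed 16-bit range.
            samples[i] = (short) Math.round(Math.sin(phase) * Short.MAX_VALUE);
        }
        return samples;
    }

    public static void main(String[] args) {
        // Example values (assumptions): a 440 Hz tone, 480 frames at 48 kHz.
        short[] tone = sine(440.0, 48_000, 480);
        System.out.println("frames: " + tone.length + ", first sample: " + tone[0]);
    }
}
```

On a device, a `short[]` like this is the kind of uncompressed data that the low-level playback APIs work with, which is why no decoding step is needed at playback time.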
The MediaCodec class can be used to access low-level media codecs, such as encoder/decoder components. It is part of the Android low-level multimedia support infrastructure and is normally used together with MediaExtractor, MediaSync, MediaMuxer, MediaCrypto, MediaDrm, Image, Surface, and AudioTrack. In MediaFormat, the format of the media data is specified as string/value pairs.

MediaFormat is useful for reading encoded files and every detail connected to the content. MediaStore is the media provider; it contains metadata for all available media on both internal and external storage devices. The MediaPlayer class can be used to control playback of audio/video files and streams, and playback control is managed as a state machine. MediaRecorder is a high-level API used to record audio and video. Its recording control is based on a simple state machine. It is generally better to use AudioRecord for more flexibility.
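The idea that playback control is "managed as a state machine" can be sketched in plain Java. The class below is a simplified, hypothetical model (`PlaybackStateMachine` is not an Android class, and it covers only a subset of the states and transitions of the real MediaPlayer). It is meant only to show why calling methods in the wrong order fails.

```java
import java.util.EnumSet;
import java.util.Set;

/** Simplified, hypothetical model of a playback lifecycle; not the Android MediaPlayer. */
final class PlaybackStateMachine {
    enum State { IDLE, INITIALIZED, PREPARED, STARTED, PAUSED, STOPPED }

    private State state = State.IDLE;

    State state() { return state; }

    // Each call is legal only from certain states (a subset of the real lifecycle).
    void setDataSource() { move(EnumSet.of(State.IDLE), State.INITIALIZED); }
    void prepare() { move(EnumSet.of(State.INITIALIZED, State.STOPPED), State.PREPARED); }
    void start() { move(EnumSet.of(State.PREPARED, State.PAUSED, State.STARTED), State.STARTED); }
    void pause() { move(EnumSet.of(State.STARTED, State.PAUSED), State.PAUSED); }
    void stop() {
        move(EnumSet.of(State.PREPARED, State.STARTED, State.PAUSED, State.STOPPED), State.STOPPED);
    }
    void reset() { state = State.IDLE; } // allowed from any state in this model

    private void move(Set<State> allowedFrom, State to) {
        if (!allowedFrom.contains(state)) {
            throw new IllegalStateException("cannot go from " + state + " to " + to);
        }
        state = to;
    }

    public static void main(String[] args) {
        PlaybackStateMachine player = new PlaybackStateMachine();
        player.setDataSource();
        player.prepare();
        player.start();
        player.pause();
        System.out.println("state after pause: " + player.state());
    }
}
```

In this model, calling `start()` on a freshly created object throws `IllegalStateException`, because the data source has not been set and prepared first. A recorder's "simple state machine" can be modeled the same way with its own set of states.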

AudioTrack is a low-level API, not meant to be real time. It manages and plays a single audio resource for Java applications, streaming/decoding PCM audio buffers to the audio sink for playback by "pushing" the data to the AudioTrack object. AudioRecord manages the audio resources for Java applications to record audio from the hardware by "pulling" (reading) the data from the AudioRecord object. Rate, quality, encoding, and channel configuration can be set. AudioManager provides access to volume and ringer mode control.

As you can see, many of these APIs have been around since the beginning of Android at API level 1. Others have been added to Android more recently.
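The sample rate, encoding, and channel configuration mentioned above determine how many bytes one frame of PCM audio occupies, and therefore how much playing time a buffer of a given size represents. The sketch below works through that arithmetic in plain Java. The class and method names are hypothetical, and the 16-bit stereo 48 kHz figures are example values, not numbers taken from this article.

```java
/** Hypothetical helper for illustration; not part of the Android SDK. */
final class PcmBufferMath {
    private PcmBufferMath() {}

    /** One frame holds one sample per channel. */
    static int bytesPerFrame(int channelCount, int bytesPerSample) {
        return channelCount * bytesPerSample;
    }

    /** Duration, in milliseconds, of the audio held in a buffer of the given size. */
    static double bufferMillis(int bufferBytes, int sampleRateHz,
                               int channelCount, int bytesPerSample) {
        int frames = bufferBytes / bytesPerFrame(channelCount, bytesPerSample);
        return 1000.0 * frames / sampleRateHz;
    }

    public static void main(String[] args) {
        // Example (assumed values): 16-bit (2-byte) stereo audio at 48 kHz.
        int bufferBytes = 3840; // 960 frames of 4 bytes each
        double ms = bufferMillis(bufferBytes, 48_000, 2, 2);
        System.out.println(bufferBytes + " bytes hold " + ms + " ms of audio");
    }
}
```

Because a buffer generally has to be filled before it can be handed on, its duration is one contributor to the delay between producing and hearing a sound, which is why buffer sizing comes up in discussions of audio latency.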

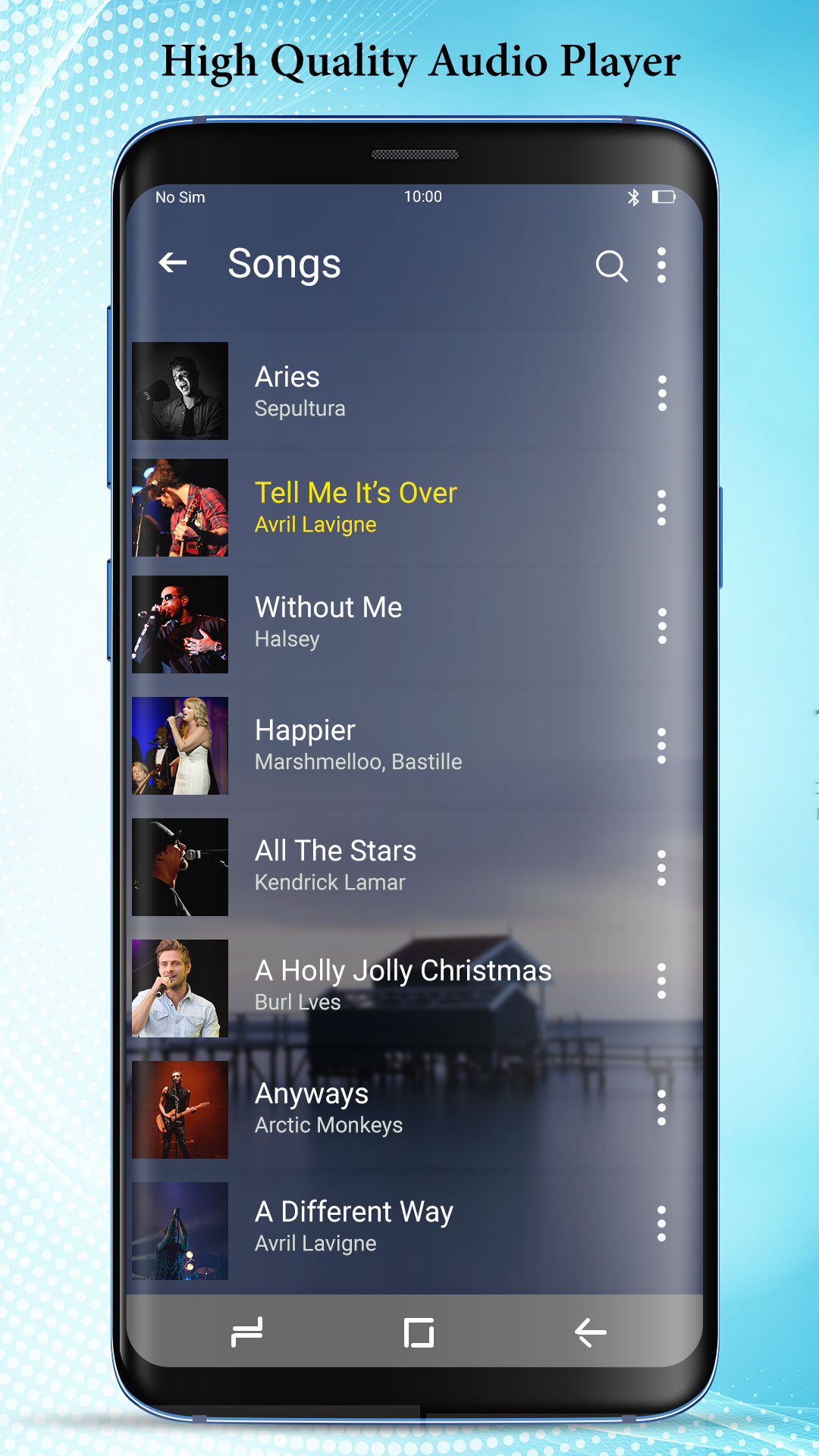

The following table summarizes the classes and APIs for Android audio.

It can get confusing with all of the audio APIs and classes in Android.

Y indicates encoding or decoding is available (all SDK versions) for a codec. N indicates encoding is not available for a codec.